
A customer logs into their banking app. They pass multi-factor authentication. They confirm the payment.

And minutes later, the money is gone.

In Authorized Push Payment (APP) fraud, the customer authorizes the transaction themselves, often under intense psychological pressure from an impersonation scam, investment scheme, or remote-access “fraud support” call.

That distinction changes everything.

APP fraud exploits trust rather than credentials. It thrives on real-time payment rails, cross-channel interactions, and subtle identity compromises that unfold long before the final transfer is made. By the time the payment occurs, the manipulation has often been building across devices, sessions, and accounts for days or weeks.

For modern banks, the consequences extend far beyond a single fraudulent transaction.

APP fraud now intersects with:

- Mandatory reimbursement frameworks

- Regulatory expectations around anti-money laundering (AML) and suspicious activity reporting

- Public scrutiny of scam-handling outcomes

- Rising operational investigation volumes

- And, critically, customer trust

As real-time payments grow globally, the window to detect and stop scams continues to shrink. Traditional controls, such as login security, multi-factor authentication (MFA), and transaction monitoring, were designed to prevent unauthorized access. They’re far less effective when the customer appears to be acting willingly.

This report examines how APP fraud has become a defining risk category in modern banking and why solving it requires more than stronger authentication or post-transaction review. To effectively prevent APP fraud, banks must understand the identity story that unfolds before the payment is made.

The APP fraud era

APP fraud is no longer an emerging threat. It’s now a major loss category in retail banking across the globe.

Historically, most consumer payment fraud has occurred through card fraud, in which stolen card details are used to make unauthorized transactions. Today, a growing number of losses are concentrated in credit transfer fraud, which involves payments sent directly between bank accounts. APP fraud is a form of credit transfer fraud.

In the European Economic Area, fraud involving credit transfers reached €2.5 billion in 2024, accounting for roughly 60% of total payment fraud losses by value. Manipulation of the payer (the regulatory term for APP scams) now represents the leading fraud type in credit transfers. By comparison, card fraud accounted for approximately €1.3 billion.

Although credit-transfer fraud occurs less frequently than card fraud, the average fraudulent credit transfer exceeds €2,000, significantly higher than typical card fraud values. Fewer incidents. Higher impact.

This shift isn’t confined to Europe.

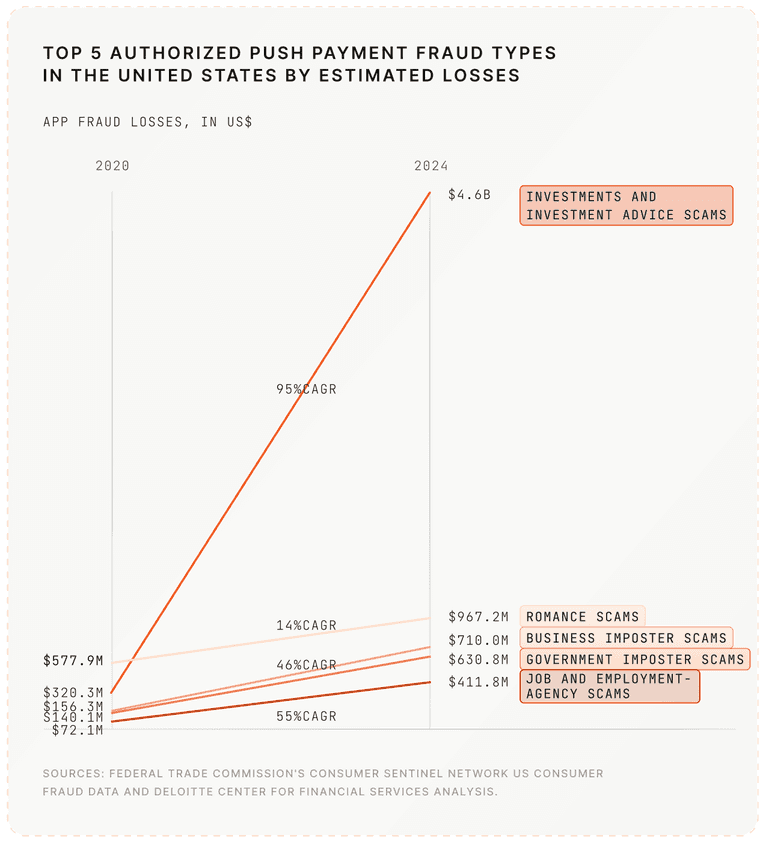

In the United States, APP scams generated more than $2 billion in reported losses in 2023. Across six major real-time payment markets, including the U.S., UK, India, Brazil, Australia, and the UAE, losses are projected to reach $7.6 billion by 2028, with real-time payment adoption acting as a primary accelerant. Industry projections indicate that APP scams on real-time payment rails will account for the majority of those losses.

The pattern is structural: as real-time payments scale, scam losses scale with them.

The UK shows the financial and consumer impact of APP fraud

The UK provides a clear case study in how APP fraud reshapes financial risk.

In 2024, £450.7 million was lost to APP fraud.

But the financial exposure tells only part of the story.

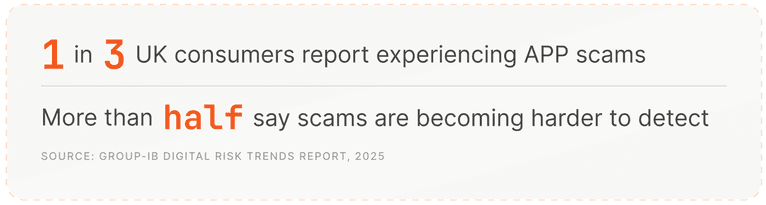

Up to 1 in 3 UK consumers report being victimized by APP fraud, and more than half say it’s becoming harder to detect scam activity.

This level of exposure reflects both the scale and sophistication of the modern scam environment. Scam awareness is now so widespread in the UK that TV shows such as Scam Interceptors are built around tracking scam attempts in real time.

The composition of loss reveals deeper structural dynamics:

- 70% of losses originated from crimes committed via online platforms

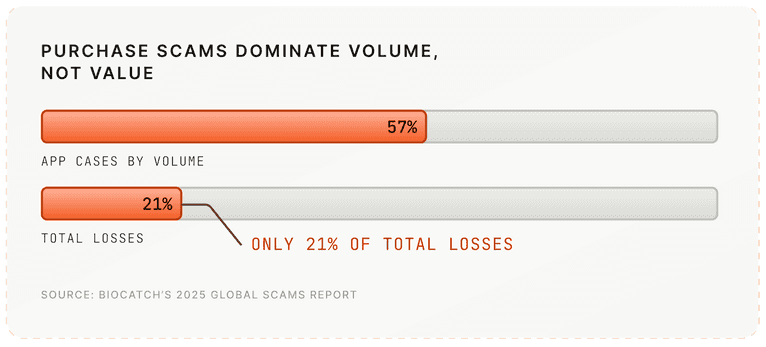

- Purchase scams represented 57% of all APP cases by volume, but only 21% of total losses

- Investment and romance scams accounted for more than 40% of total criminal gains

The distinction between high-volume and high-value scam types has important implications for fraud and risk teams.

High-volume purchase scams result in sustained operational strain. Teams have to manage thousands of cases that may involve manual investigation, reimbursement processing, and customer support costs.

High-value investment and romance scams generate disproportionate financial exposure and reputational risk for institutions, drawing greater scrutiny from executives, customers, and the broader public.

Together, these scams create operational strain for fraud teams and financial exposure for institutions, while carrying real human consequences for victims.

Industrialized social engineering

An underlying driver is the explosion in impersonation and social-engineering scams.

Criminals increasingly pose as bank fraud teams, government agencies, suppliers, romantic partners, or investment advisors. These scams are scripted, coordinated, and often supported by call centers, phishing infrastructure, and AI-generated impersonation.

Remote access tools add another layer. Victims are persuaded to install legitimate remote-access software under the guise of fraud protection. Once installed, criminals effectively guide or directly control the transaction process in real time. In high-value “phantom hacker” incidents, remote-access tools are implicated in a substantial share of complex cases.

Device spoofing, emulator usage, and AI-driven identity forgery, including deepfakes and synthetic identity documents, further blur the boundary between legitimate and malicious behavior.

Real-time payment rails leave fraud teams seconds to intervene

The spread of real-time payment rails has put further strain on response and recovery. Faster Payments in the UK, SEPA Instant across Europe, and the expansion of FedNow and RTP in the United States compress intervention windows from hours to seconds.

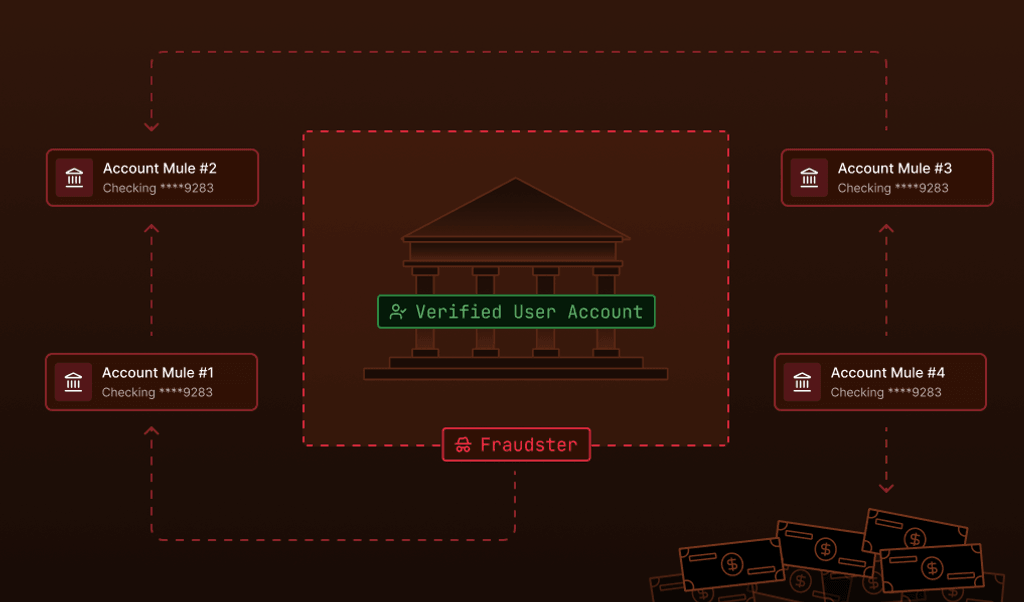

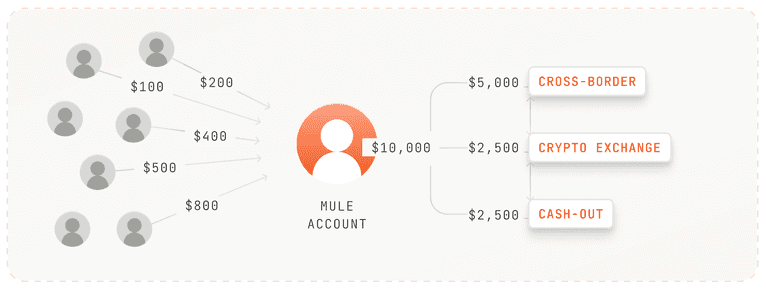

Once funds are transferred, they’re rapidly dispersed through mule accounts, which are often newly opened or lightly vetted accounts used to receive and forward scam proceeds. Often, the scammed funds are routed across borders and jurisdictions within minutes.

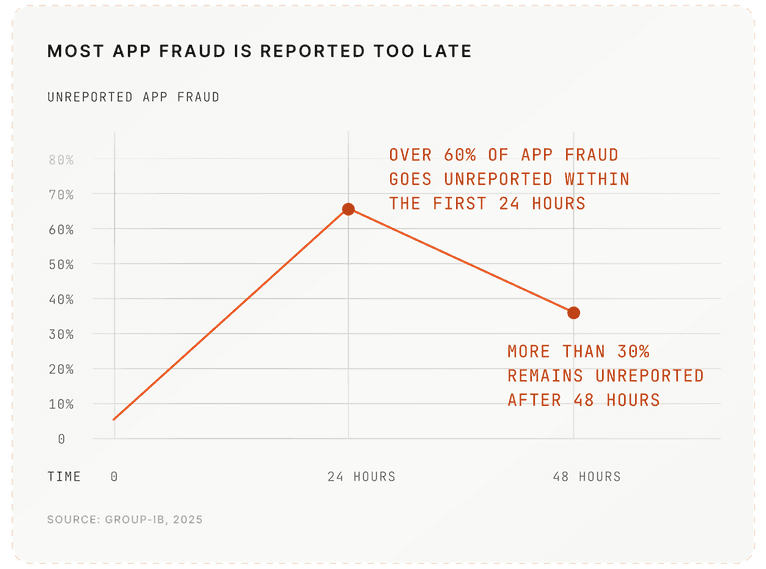

Industry data further indicates that a majority of APP fraud incidents are not reported within the first 24 hours, dramatically reducing recovery prospects.

Reimbursement and regulatory escalation

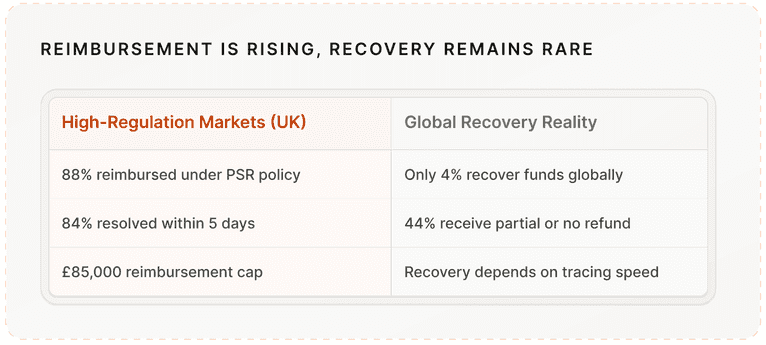

The recovery picture is uneven.

In highly regulated markets like the UK, mandatory reimbursement frameworks have materially changed victim outcomes. Under the Payment Systems Regulator’s policy, 88% of APP scam losses claimed from payment firms were returned to victims in 2025, with 84% of cases resolved within five days. The reimbursement cap stands at £85,000 per case, with liability shared between sending and receiving institutions.

But reimbursement is not the same as recovery.

Globally, recovery rates remain far lower. Some reports indicate that only 4% of victims successfully recover funds once transfers are complete. Nearly half (44%) of consumers who sought reimbursement received only partial or no refund.

The implication is structural. Because network-level recovery remains rare, financial exposure shifts directly onto institutions.

What was once framed as a customer error is increasingly becoming a balance-sheet liability.

Across Europe, fraud is formally recognized as a “predicate offense” under AML legislation, meaning the proceeds of fraud can be treated as money laundering when those funds are moved or concealed. Scam proceeds moving through mule accounts therefore trigger Suspicious Activity Report (SAR) obligations.

Operational strain reflects this environment. In 2023-24, over 870,000 SARs were filed in the UK, near historic highs. Scam-related flows contribute to this sustained volume, increasing pressure across fraud and compliance teams.

In parallel, the Financial Conduct Authority (FCA) has made fighting financial crime a priority in its 2025–2030 strategy, reinforcing enforcement expectations.

APP fraud isn’t just a trust problem. It’s a brand problem.

Financial loss is only part of the equation. APP fraud is now closely tied to business image and brand credibility.

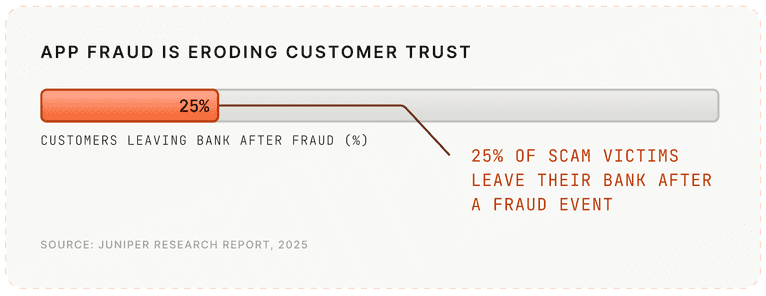

Industry research indicates that 25% of scam victims leave their bank after a fraud event, even when reimbursement is provided.

In digital-first banking, perception of protection directly influences customer retention rates and Net Promoter Score (NPS). Public criticism of scam handling outcomes, particularly in the UK, has increased reputational risk and financial exposure.

Why traditional controls fail APP fraud

Behind each authorized transfer is a longer sequence of device signals, cross-session interactions, and behavioral changes. Yet most traditional controls evaluate these signals in isolation.

APP fraud succeeds not because banks fail to authenticate customers, but because they struggle to connect identity continuity across sessions, devices, and accounts in real time.

The payment isn’t the beginning of the fraud; it’s the endpoint.

The structural failure

Many modern banks and financial institutions have spent the past decade strengthening authentication: stronger passwords, biometrics, multi-factor authentication, and behavioral login scoring.

These controls were built to prevent unauthorized access. APP fraud operates across a different vector: the customer authenticates successfully but is then manipulated into authorizing the payment themselves. This distinction defines the challenge.

Credentials are not identity

A password, biometric scan, or one-time code confirms that someone can access an account. It doesn’t confirm that the account holder is acting independently, free from manipulation or coercion.

In impersonation scams, victims believe they are interacting with legitimate institutions. In remote-access scams, criminals persuade customers to install legitimate software and guide them through authentication in real time.

Authentication succeeds because the customer is real. Systems verify credentials, but don’t verify context.

MFA confirms access, not intent

Multi-factor authentication remains highly effective against classic account takeover.

But APP fraud targets psychology, not passwords.

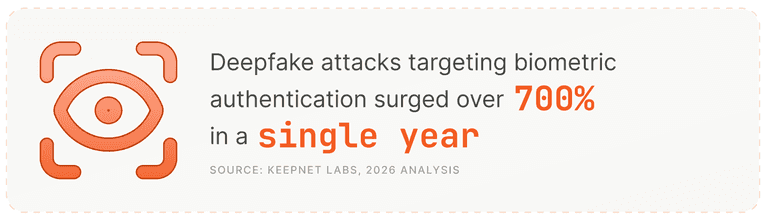

Even advanced identity verification tools are under pressure. AI-enabled document forgery has risen sharply in the past year, with some industry estimates showing increases of more than 240% year-over-year. Deepfake-driven fraud attempts targeting biometric verification increased by over 700% in 2023, illustrating how convincingly identity signals can be simulated.

In many APP scenarios:

- The device appears familiar

- The login behavior is consistent with historical patterns

- The transaction falls within normal thresholds

- No credential stuffing or brute-force attempts occur

From a technical perspective, the session looks legitimate. The manipulation lives outside the authentication layer.

Transaction monitoring happens too late

Most transaction monitoring engines evaluate risk at the moment of payment. By the time a transaction is evaluated:

- The victim may have engaged across multiple channels

- A new payee may have been added under guidance

- Remote-access software may already be active

- Psychological pressure may have escalated for hours or days

Thus, monitoring the transaction alone means assessing the outcome, rather than the environment that produced it.

Signals are fragmented across channels

Modern scam journeys are multi-channel and cross-device.

A customer may interact across:

- Messaging apps

- Mobile banking

- Call centers

- Desktop sessions

Each interaction generates signals. Yet those signals typically reside in separate systems, such as fraud analytics platforms, digital activity logs, AML monitoring tools, and case management tools.

Risk is evaluated in fragments, but scam infrastructure operates as a network. So, without persistent visibility across sessions and devices, identity continuity remains invisible, even while coordinated activity is unfolding.

Investigations rely heavily on manual judgment

Once an APP scam is reported, institutions must determine whether coercion occurred, whether reimbursement obligations apply, whether suspicious activity reporting is required, and whether mule account patterns indicate broader laundering activity.

This reconstruction depends heavily on manual review. Investigators piece together customer statements, session logs, and siloed behavioral data after funds have already moved. In this scenario, manual investigation compensates for the lack of continuous identity visibility.

The friction dilemma: protection vs consumer experience

Retail banks operate under competing pressures. Customers expect instant payments, seamless digital journeys, and minimal friction. Regulators expect proactive intervention, suspicious activity reporting, and reimbursement compliance.

Aggressive transaction blocking introduces friction and false positives. Yet, insufficient intervention risks financial loss and reputational damage.

As a result, many institutions continue to defend login checkpoints while scams exploit identity continuity across sessions, devices, and channels. Banks can authenticate the login, but they can’t reliably see the device story behind the payment. APP fraud doesn’t defeat authentication; it exploits the gap between verified access and verified intent.

Until risk evaluation moves from isolated checkpoints to persistent identity visibility, traditional controls will remain misaligned with how modern scams operate.

Scam networks are sophisticated and operate like distributed systems

APP fraud is highly coordinated, repeatable, and increasingly industrialized, with scam networks organized around infrastructure rather than individuals.

Behind a single authorized transfer, there is often a layered ecosystem:

Mule account supply chains

Mule accounts are recruited, opened, tested, and rotated continuously. Fraudulent transfers are disproportionately cross-border compared to legitimate payments, reflecting structured dispersion rather than random movement. Funds are frequently fragmented and forwarded within minutes, reducing recovery probability. These flows mimic classic money laundering layering techniques.

Device reuse across identities

Fraud operators rarely bind one identity to one device. Virtualized environments allow attackers to reuse device configurations across onboarding attempts, mule access, and coordinated transaction activity. Accounts rotate quickly. Device environments often do not.

Emulator farms enable scale

Emulator farms allow operators to replicate thousands of mobile environments simultaneously. This supports synthetic identity onboarding, mule account provisioning, coordinated victim guidance sessions, and parallel transaction routing.

At the application layer, sessions appear compliant. At the device layer, configuration patterns converge.

Remote-access tool fingerprints

In complex APP scams, remote-access tools introduce a second operator into the session. Industry investigations indicate specific remote-access platforms were implicated in nearly three-quarters of documented remote-control fraud incidents in 2025.

These tools introduce measurable artifacts, such as concurrent control patterns, abnormal input cadence, and tool-specific environmental signatures.

Authentication passes, and the underlying environment persists.

Device-level visibility reveals the coordination

Scam networks rotate accounts rapidly while reusing underlying environments.

Even when behavior looks legitimate at the session level, common technical traces may remain, such as reused emulator configurations, recurring device attributes across unrelated accounts, remote-access tool artifacts, and cross-account clustering patterns.

If banks evaluate identity only at login or transaction checkpoints, these traces will remain invisible.

When identity is evaluated at the device layer, new capabilities emerge:

- Identify devices, not just accounts: The same virtualized or physical environment operating across multiple identities becomes detectable.

- Link activity across sessions and channels: Device-level continuity allows institutions to connect onboarding, login, payee addition, and transfer activity over time.

- Detect compromised or dual-controlled access: Remote-access artifacts and abnormal control signals can indicate manipulation even when authentication succeeds.

- Surface mule network clustering: Shared device attributes across recipient accounts can reveal structured scam coordination rather than isolated transactions.

APP fraud appears compliant at the credential layer. It often leaves continuity at the device layer. Scam operations spread because the underlying environment scales. Device intelligence makes that infrastructure measurable.
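As a rough illustration of how shared device attributes can surface clustering, the sketch below groups accounts that are linked, directly or transitively, by a common device identifier. It assumes the bank already has some stable `device_id` per session; the function name, the input shape, and the identifiers are hypothetical and do not come from any specific product.

```python
from collections import defaultdict


def cluster_accounts_by_device(observations):
    """Group accounts linked, directly or transitively, by a shared device.

    `observations` is an iterable of (account_id, device_id) pairs
    (a hypothetical input shape). Returns a list of sets of account IDs,
    keeping only clusters that contain two or more accounts.
    """
    parent = {}

    def find(node):
        # Union-find lookup with path halving.
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]
            node = parent[node]
        return node

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    accounts = set()
    for account_id, device_id in observations:
        accounts.add(account_id)
        # Namespace keys so an account ID can never collide with a device ID.
        union(("account", account_id), ("device", device_id))

    clusters = defaultdict(set)
    for account_id in accounts:
        clusters[find(("account", account_id))].add(account_id)

    return [members for members in clusters.values() if len(members) > 1]
```

In this sketch, two accounts that never share a device directly can still land in the same cluster if a third account bridges their devices, which is the kind of transitive link that account-by-account review tends to miss.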

The Goldilocks zone of fraud detection: Real-time protection without harming user experience

The future of APP fraud prevention is invisible and adaptive.

Banks can’t solve APP fraud by adding friction to everyone. Yet, as scam losses rise and reimbursement frameworks expand, the instinctive response is often more controls: more step-up authentication, more warnings, more payment delays. But blanket friction creates its own risk. False positives rise. Customer frustration increases. In competitive digital markets, trust erodes as quickly as fraud spreads.

Adaptive friction, not universal friction

When institutions can distinguish between a long-established device with a consistent history, a newly observed virtualized or emulator environment, a session exhibiting remote-access artifacts, and a device linked to prior cross-account activity, they can apply intervention proportionally.

Low-risk sessions move quickly. Elevated-risk sessions slow down. Instead of forcing every customer through additional verification, banks can reserve step-up authentication, cooling-off periods, or payment review for sessions where device-level risk is evident. Protective measures become precise and timely rather than a blanket defense.
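A minimal sketch of proportional intervention might look like the following. The `DeviceContext` fields, the thresholds, and the mapping from signal to action are all illustrative assumptions chosen to mirror the four device situations described above; they are not a recommended policy.

```python
from dataclasses import dataclass
from enum import Enum

# Illustrative thresholds only (assumptions, not guidance).
MIN_ESTABLISHED_DAYS = 30
MAX_LINKED_ACCOUNTS = 2


class Intervention(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up_authentication"
    COOLING_OFF = "cooling_off_period"
    REVIEW = "payment_review"


@dataclass(frozen=True)
class DeviceContext:
    days_known: int               # days since the device was first seen on this account
    is_virtualized: bool          # emulator or virtual machine detected
    remote_access_detected: bool  # remote-access artifacts present in the session
    linked_accounts: int          # other accounts previously seen on this device


def choose_intervention(ctx: DeviceContext) -> Intervention:
    """Map device-level context to the lightest intervention that fits."""
    if ctx.remote_access_detected:
        # A possible second operator: delay the payment rather than block it.
        return Intervention.COOLING_OFF
    if ctx.linked_accounts > MAX_LINKED_ACCOUNTS:
        return Intervention.REVIEW
    if ctx.is_virtualized or ctx.days_known < MIN_ESTABLISHED_DAYS:
        return Intervention.STEP_UP
    # Long-established device with a clean history: no added friction.
    return Intervention.ALLOW
```

Under these assumed thresholds, a device known for a year with no other signals returns `Intervention.ALLOW`, while the same device with remote-access artifacts returns `Intervention.COOLING_OFF`.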

Risk scoring must happen before the payment screen

With real-time payment systems increasingly in use, intervention windows are measured in seconds. By the time a transfer reaches the confirmation screen, the opportunity to assess context is already narrowing.

For risk evaluation to be effective and timely, it needs to be more proactive; it cannot begin at the moment of payment. It has to build during the session itself.

Persistent device intelligence allows institutions to recognize stable device characteristics before authentication completes. It can detect virtualization or automation environments as they initialize. And it can surface signs of remote access while interaction is still unfolding. It can also connect the current session to prior cross-account device activity — context that rarely appears at the transaction layer alone.

When risk signals are evident earlier in the journey, intervention becomes more measured. High-risk sessions can trigger additional review before funds move, while established, low-risk devices proceed without interruption.
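One way to picture risk that builds during the session, rather than at the payment screen, is a running score updated as each signal is observed. The signal names, weights, and threshold below are hypothetical placeholders; a real system would calibrate or learn them.

```python
from dataclasses import dataclass, field

# Hypothetical signal weights and threshold, for illustration only.
SIGNAL_WEIGHTS = {
    "emulator_detected": 40,
    "remote_access_artifact": 50,
    "device_seen_on_other_accounts": 30,
    "new_payee_added": 15,
}
REVIEW_THRESHOLD = 60


@dataclass
class SessionRisk:
    """Accumulates risk across a session, before any payment is submitted."""

    score: int = 0
    signals: list = field(default_factory=list)

    def observe(self, signal: str) -> None:
        # Count each known signal once; ignore anything unrecognized.
        if signal in SIGNAL_WEIGHTS and signal not in self.signals:
            self.signals.append(signal)
            self.score += SIGNAL_WEIGHTS[signal]

    def needs_review(self) -> bool:
        return self.score >= REVIEW_THRESHOLD


session = SessionRisk()
session.observe("remote_access_artifact")  # seen shortly after login
session.observe("new_payee_added")         # seen before the transfer screen
flag_before_payment = session.needs_review()
```

With these placeholder weights, the session crosses the review threshold once the payee is added, so the decision is available before the customer reaches the confirmation screen.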

The shift is subtle but important. When decisioning happens earlier in the interaction, where context is richer, banks can intervene proactively and with more confidence.

Better signals strengthen existing AI models

Fraud detection is ultimately a modeling problem. And models reflect the quality and depth of the data they receive.

When institutions rely primarily on credential events, payee changes, and transaction thresholds, they’re modeling outcomes. They aren’t modeling the coordinated environment behind them. That limits visibility to what happens at the moment of login or payment, rather than how identity behaves across sessions and devices over time.

Persistent device intelligence expands that field of view.

When device-level continuity is incorporated into existing AI and machine learning systems, institutions gain the ability to connect activity that would otherwise appear unrelated. Patterns emerge across accounts and sessions. Environment reuse becomes measurable. Coordinated behavior can be identified earlier, before it culminates in a high-value and fraudulent credit transfer.

The impact grows over time, as ML models learn and detection accuracy improves. Static rules become less necessary. False positives decline because models are working with richer context rather than blunt thresholds.
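To make the idea of richer model inputs concrete, the sketch below derives a few device-continuity features and merges them with transaction-level features. The feature names, the `history` record shape, and the sample values are assumptions made for illustration; they do not describe any particular model or vendor API.

```python
from datetime import datetime


def device_features(history, device_id, now):
    """Derive device-continuity features from past observations.

    `history` is an iterable of (account_id, device_id, seen_at) tuples,
    where `seen_at` is a datetime (a hypothetical record shape).
    """
    seen = [(account, ts) for account, device, ts in history if device == device_id]
    if not seen:
        return {"device_age_days": 0, "accounts_on_device": 0, "device_is_new": True}
    first_seen = min(ts for _, ts in seen)
    return {
        "device_age_days": (now - first_seen).days,
        "accounts_on_device": len({account for account, _ in seen}),
        "device_is_new": False,
    }


# Transaction-only features describe the outcome; device features add the environment.
history = [
    ("acct_a", "dev_1", datetime(2025, 1, 1)),
    ("acct_b", "dev_1", datetime(2025, 1, 20)),
]
txn_features = {"amount": 2400.0, "new_payee": True}
model_input = {**txn_features, **device_features(history, "dev_1", datetime(2025, 1, 31))}
```

The merged `model_input` can then be passed to whatever model the institution already runs; the point of the sketch is only that the device fields carry context the transaction fields lack.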

Persistent device intelligence for APP fraud prevention

More context doesn’t mean more friction. Persistent device intelligence is invisible to customers and enables more confident decisions for fraud and risk teams. Over time, that confidence reduces the need for broad transaction warnings and universal step-ups, because legitimate activity and coordinated manipulation become easier to distinguish.

APP fraud succeeds because infrastructure persists across sessions, devices, and accounts. Forward-looking institutions are responding by incorporating persistent device intelligence into their existing fraud models and AI frameworks — enabling earlier detection, more adaptive intervention, and stronger protection without disrupting legitimate customers.

For risk reduction in a real-time payment environment, persistent device intelligence is no longer an optional enhancement — it is now a foundational fraud defense layer.

Want to strengthen your APP scam prevention strategies?

Learn more about how Fingerprint’s device intelligence can help