Fraudulent traffic is becoming harder to distinguish from legitimate traffic. Instead of obvious, noisy attacks and suspicious patterns, modern fraud is designed to blend in. Fraudsters rely on manipulated browser environments that rotate fingerprints, spoof device characteristics, and create hundreds of seemingly legitimate identities.

To address this, we have rolled out enhanced anti-detect browser detection, a major upgrade to Fingerprint’s device intelligence that surfaces sophisticated fraud activity that previously hid inside normal-looking traffic.

Why anti-detect browsers are a problem

Anti-detect browsers are tools designed to mask or manipulate a browser’s fingerprint so each session appears to come from a different person or device.

They are commonly used to create large numbers of fake “unique” users, evade traditional device fingerprinting, and bypass IP-based rules and basic fraud detection systems.

This makes them especially effective for fraud, such as:

- Account takeover, where attackers hide while using stolen credentials

- Multi-accounting, where one operator controls hundreds of accounts

- Bonus and promotion abuse, where fake identities exploit incentives at scale

Anti-detect browsers are also frequently paired with automation tools to scale these attacks further. A single operator can combine fingerprint rotation with automated scripts to generate thousands of sessions with minimal manual effort. To basic detection systems, these sessions are indistinguishable from real users.

What’s changed: deeper detection of browser manipulation

We have significantly expanded our ability to detect intentionally manipulated browser environments, especially those designed to evade traditional defenses.

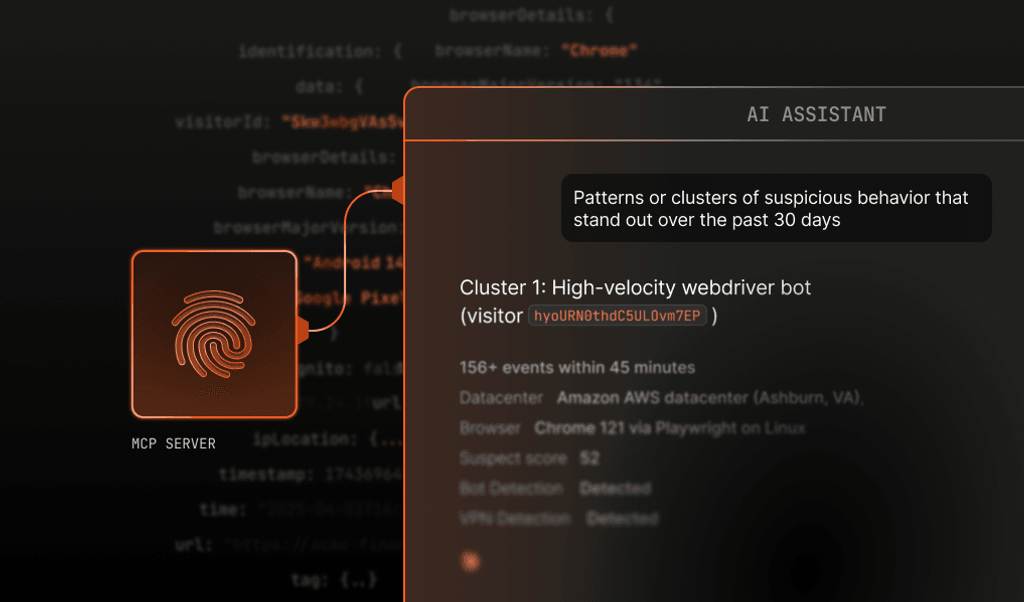

This enhancement adds a deeper layer of browser-level analysis, catching inconsistencies and manipulation patterns that signals such as IP addresses and user agents cannot reliably surface on their own. As part of this update, we’ve introduced a new machine learning layer that expands detection beyond what rules alone can catch. Previously, detection focused primarily on identifying known tools and patterns. Now, detection also includes models trained on real-world browser manipulation behavior, allowing us to surface previously invisible threats.

This means we can detect both known anti-detect tools and unknown or evolving manipulation patterns designed to evade traditional methods.

As a result, detection coverage has expanded significantly:

- Anti-detect browser detection increased from 2.1% → 6.4% (+4.3pp)

- Overall tampering detection increased from 6.5% → 7.8% (+1.3pp)
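To put the coverage figures above in perspective, the short Python sketch below recomputes the percentage-point change and also derives the relative change, which is not stated explicitly. The function and variable names are illustrative, and the inputs are simply the rates quoted above.

```python
def coverage_change(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative multiple) for two rates in percent."""
    # Percentage points: plain difference between the two rates.
    points = round(after_pct - before_pct, 1)
    # Relative multiple: how many times larger the new rate is than the old one.
    multiple = round(after_pct / before_pct, 2)
    return points, multiple


anti_detect = coverage_change(2.1, 6.4)
tampering = coverage_change(6.5, 7.8)

print(anti_detect)  # (4.3, 3.05)
print(tampering)    # (1.3, 1.2)
```

In relative terms, the anti-detect browser detection rate roughly tripled, while the overall tampering detection rate rose by about 20%.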

A meaningful portion of the newly detected anti-detect browser traffic was already being flagged by our anomaly detection systems. This overlap reinforces consistency across signals while still expanding visibility into sophisticated abuse.

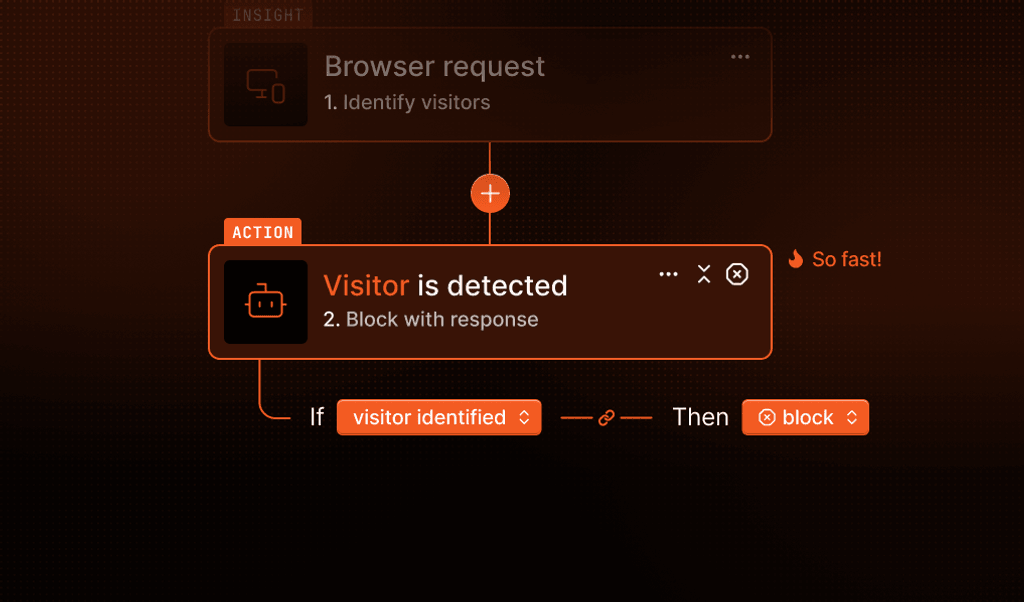

Importantly, all of this happens silently in the background, without added friction.

Better signals, smarter decisions

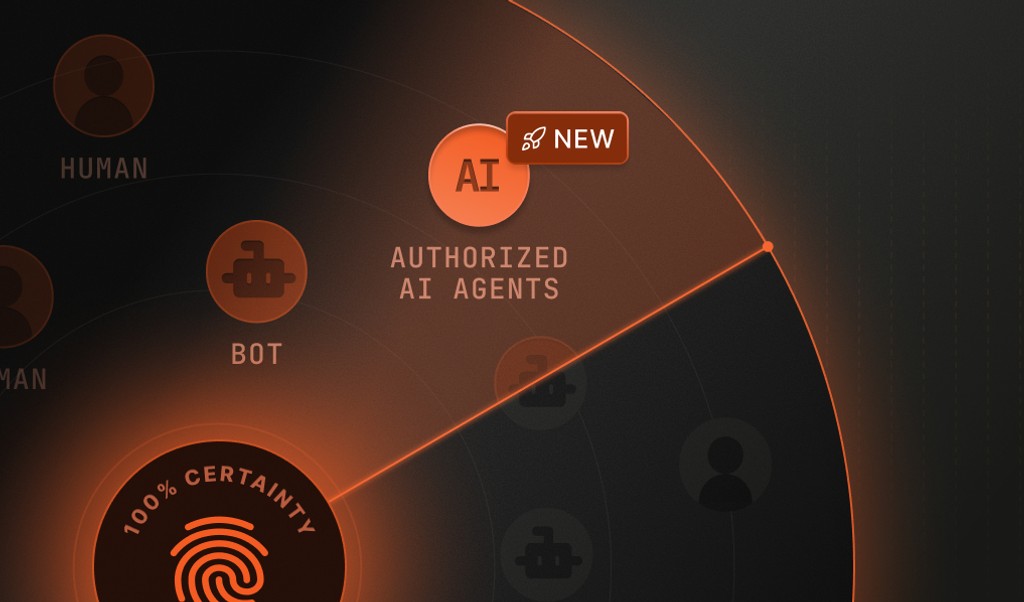

Most teams are not trying to block every unusual browser environment. Legitimate users can appear in many forms, including privacy-focused browsers, corporate networks, and accessibility tools.

The real challenge is distinguishing legitimate users from intentionally manipulated browser environments designed to create synthetic identities at scale.

Teams need to understand which manipulated environments represent real abuse and where to focus investigations, rules, or downstream controls.

By surfacing sophisticated browser manipulation more reliably, Fingerprint helps teams make clearer decisions with cleaner signals, less noise, and fewer false positives.

In addition to improved detection, this update introduces new machine learning–based signals that provide more transparency into risk levels:

- tampering_ml_score: a numeric score (0–1) representing the likelihood of tampering

- tampering_confidence: a normalized confidence level (e.g., low, medium, high)

These signals help teams move beyond binary decisions and better understand which sessions require action versus monitoring.
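As a rough illustration of moving beyond a binary block/allow decision, the sketch below maps the two signals to three tiers. Only the field names `tampering_ml_score` and `tampering_confidence` come from this article. The `TamperingSignals` container, the `decide` function, the `Action` tiers, and both thresholds are hypothetical and would need tuning against your own traffic. The sketch also does not assume any particular API response shape.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    MONITOR = "monitor"
    CHALLENGE = "challenge"


@dataclass(frozen=True)
class TamperingSignals:
    # Field names mirror the two signals described above; this container
    # itself is a hypothetical stand-in, not an actual response type.
    tampering_ml_score: float   # 0-1 likelihood of tampering
    tampering_confidence: str   # e.g. "low", "medium", "high"


# Hypothetical thresholds, chosen only for illustration.
MONITOR_THRESHOLD = 0.5
CHALLENGE_THRESHOLD = 0.8


def decide(signals: TamperingSignals) -> Action:
    """Map the two tampering signals to a tiered action."""
    score = signals.tampering_ml_score
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"tampering_ml_score out of range: {score}")
    confidence = signals.tampering_confidence.lower()

    # Act only when a high score is backed by high confidence.
    if score >= CHALLENGE_THRESHOLD and confidence == "high":
        return Action.CHALLENGE
    # Elevated scores without strong confidence are watched, not blocked.
    if score >= MONITOR_THRESHOLD:
        return Action.MONITOR
    return Action.ALLOW


print(decide(TamperingSignals(0.95, "high")))   # Action.CHALLENGE
print(decide(TamperingSignals(0.95, "low")))    # Action.MONITOR
print(decide(TamperingSignals(0.10, "low")))    # Action.ALLOW
```

Keeping a middle "monitor" tier is one way to avoid adding friction for legitimate users with unusual setups while still collecting evidence on suspicious sessions.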

Built for the next phase of fraud

This enhancement is part of a broader effort to adapt to how fraud continues to evolve. Attacks are becoming more subtle, identities are increasingly synthetic, and traffic that looks normal on the surface can no longer be trusted by default. As fraudsters invest more in techniques to manipulate browser environments and digital identities, detection needs to go deeper and adapt just as quickly.

Next, we will continue training machine-learning models on newly observed manipulation patterns to further expand coverage and improve accuracy over time. The goal is simple: help you understand and trust your traffic again, even as it becomes harder to tell what is real.

Learn how Fingerprint detects sophisticated browser manipulation.

Explore our anti-detect browser detection documentation to see how it works and how to get started.